You’ve probably noticed the headlines lately. NVIDIA investing billions here. OpenAI shifting its CEO closer to infrastructure. Arm building its own chips. Musk getting into chip manufacturing.

At first glance, it looks like a bunch of tech billionaires playing an expensive game of Monopoly.

But here’s the thing — this is the AI race now. And understanding it could change how you think about every AI tool you use.

It’s No Longer About the Model

For a couple of years, the big competition in AI was simple: who can build the smartest model? GPT-4 vs Claude vs Gemini — a battle of benchmarks and capabilities.

That era isn’t over, but it’s no longer the whole story.

According to IBM’s chief architects, 2026 marks the point at which AI models themselves are becoming a commodity. “It’s a buyer’s market,” as one IBM strategist put it: you can pick the model that fits your use case and be off to the races. The model is no longer the main differentiator (IBM).

So if not the model, then what? The answer is boring — and absolutely critical.

Infrastructure.

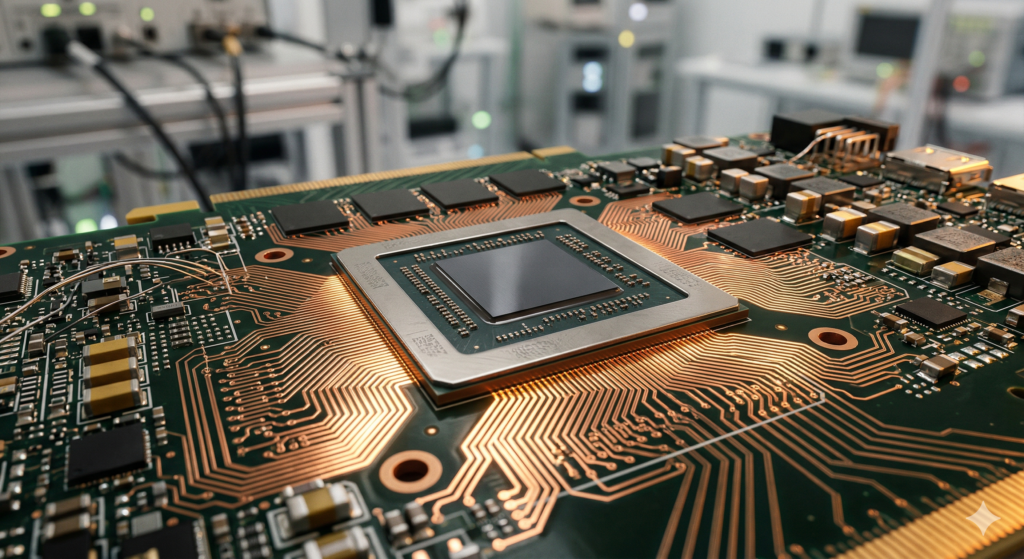

Chips. Power. Data centres. Capital. The physical backbone that makes AI run.

The Three Fronts of the AI Infrastructure War

1. The Chip Battle

Every AI model, whether it’s answering your email or running an autonomous agent, needs specialised chips to process information. Most of that work runs on GPUs (graphics processing units), which are extraordinarily good at the massively parallel maths AI requires.

Right now, NVIDIA dominates this space. But that’s starting to change.

Arm, long known purely as a chip designer rather than a manufacturer, has now unveiled its own AGI CPU built specifically for AI data centres, a major strategic shift. Early customers include Meta, OpenAI, Cloudflare, and Cerebras (Tech Startups).

Meanwhile, Elon Musk is moving into chip manufacturing to secure his AI chip supply, while Micron is ramping up spending to meet surging demand for AI memory (WBN News).

The takeaway? Everyone wants to control their own silicon. Because whoever controls the chips controls the speed — and the cost — of AI.

2. The Power Problem

Here’s something that doesn’t get talked about enough: AI is hungry. Training a frontier model can consume as much electricity as tens of thousands of homes use in a year. Running millions of queries a day isn’t cheap either.

Acquisitions of data centre cooling companies are accelerating, next-generation AI chips are entering new development cycles, and large-scale deals for AI factories and power infrastructure are emerging worldwide (WBN News).

This is why you’re seeing AI companies quietly buying up land near power stations, signing long-term energy deals, and even exploring nuclear power. The bottleneck isn’t brains — it’s electricity.

3. The Capital Race

None of this comes cheap. And the numbers being thrown around are staggering.

NVIDIA recently announced a $2 billion investment in Nebius, a full-stack AI cloud company, as part of a strategic partnership to deploy over 5 gigawatts of NVIDIA systems by the end of 2030 (NVIDIA Newsroom).
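To put 5 gigawatts in perspective, a quick back-of-the-envelope calculation helps. The sketch below assumes, purely for illustration, that the full capacity runs at a constant load around the clock for a whole year. Real deployments ramp up gradually and rarely run flat out, so treat the result as a rough upper bound rather than a forecast. The `utilisation` parameter is a hypothetical load factor, not a figure from the announcement.

```python
# Back-of-the-envelope: annual energy drawn by a given amount of power capacity.
# Assumption (illustrative only): the capacity runs at a constant load all year.

HOURS_PER_YEAR = 24 * 365  # 8,760 hours; leap years ignored


def annual_energy_twh(capacity_gw: float, utilisation: float = 1.0) -> float:
    """Return terawatt-hours consumed if `capacity_gw` runs at `utilisation` for a year.

    `utilisation` is a hypothetical average load factor between 0 and 1.
    """
    if not 0.0 <= utilisation <= 1.0:
        raise ValueError("utilisation must be between 0 and 1")
    energy_gwh = capacity_gw * utilisation * HOURS_PER_YEAR  # GW x hours = GWh
    return energy_gwh / 1_000  # 1 TWh = 1,000 GWh


if __name__ == "__main__":
    # 5 GW is the deployment target from the NVIDIA and Nebius partnership.
    print(f"{annual_energy_twh(5.0):.1f} TWh per year at full load")
```

Even at half that utilisation, the figure stays above 20 terawatt-hours a year, which helps explain why power, not just chips, has become a strategic asset.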

OpenAI has surpassed $25 billion in annualised revenue and is reportedly taking early steps toward a public listing, potentially as soon as late 2026. Rival Anthropic is approaching $19 billion in annualised revenue (Crescendo AI).

These aren’t just big numbers for the sake of it. They reflect a simple reality: building AI infrastructure at scale requires the kind of capital that only the largest players — or those backed by them — can access.

Why This Matters to You (Even If You’re Not NVIDIA)

“Okay,” you might be thinking, “this is interesting, but I’m not building data centres. Why should I care?”

Fair question. Here’s why:

The tools you use are only as good as the infrastructure behind them. When ChatGPT slows down, when your cloud AI API hits rate limits, or when costs suddenly spike, that’s the infrastructure layer showing through. As competition in this space heats up, expect it to affect pricing, availability, and which AI products survive.
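For developers, rate limits are often the most visible sign of that infrastructure layer. A common way to cope is to retry with exponential backoff and random jitter. The sketch below is generic and provider-agnostic: `RateLimitError` and `call_with_backoff` are hypothetical names standing in for whatever your AI provider’s client raises on an HTTP 429 (“Too Many Requests”) response, and the delay values are illustrative defaults rather than anything a particular API recommends.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimitError(Exception):
    """Hypothetical stand-in for a provider's 'too many requests' (HTTP 429) error."""


def call_with_backoff(
    request: Callable[[], T],
    max_retries: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
) -> T:
    """Call `request`, retrying on RateLimitError with exponential backoff and full jitter."""
    for attempt in range(max_retries + 1):
        try:
            return request()
        except RateLimitError:
            if attempt == max_retries:
                raise  # out of retries: surface the error to the caller
            # The wait ceiling doubles each attempt, bounded by max_delay.
            cap = min(max_delay, base_delay * 2 ** attempt)
            # Full jitter: sleep a random time up to the cap so clients don't retry in lockstep.
            time.sleep(random.uniform(0, cap))
    raise RuntimeError("unreachable")  # the loop always returns or raises


if __name__ == "__main__":
    # Hypothetical flaky endpoint: rate-limited twice, then succeeds.
    calls = {"n": 0}

    def flaky_request() -> str:
        calls["n"] += 1
        if calls["n"] < 3:
            raise RateLimitError("429 Too Many Requests")
        return "ok"

    print(call_with_backoff(flaky_request, base_delay=0.1))
```

Randomising the wait matters when many clients hit the same limit at once: without jitter, they all retry at the same moment and trip the limit again.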

The AI you can afford will shift. IDC forecasts that 70% of organisations will prioritise aligning technology investments with measurable business outcomes when considering new AI infrastructure, and that by 2028, 75% of enterprise AI workloads will run on tailor-made hybrid infrastructures (FPT Software). In plain terms: expect more customised, cost-optimised options to emerge as the infrastructure matures.

The second wave is coming for small businesses. While AI infrastructure is tightening at the top, a second wave of AI tools is beginning to reach small-business operators directly, reshaping how companies compete, hire, and scale (WBN News).

The Big Picture: A Two-Tier AI World?

Here’s the uncomfortable truth emerging from all this: AI might be splitting into two tiers.

At the top: the hyperscalers and the frontier AI companies. NVIDIA, Microsoft, Google, Amazon, OpenAI, Anthropic. These companies are locking in chips, power deals, and data centre capacity years in advance. Once that capacity is locked in, it’s very hard for anyone else to catch up.

At the bottom: everyone else, using the tools the top tier decides to offer, at the prices they decide to charge.

As one analysis put it, the competitive edge in 2026 is increasingly tied to who can secure chips, power, and capacity fast enough to keep shipping, making frontier AI as much an industrial-scale operations challenge as a research challenge (Tech Startups).

That’s a significant shift from the early days of AI, when a small team with a good idea could genuinely compete.

What to Watch in the Next 12 Months

If you want to stay ahead, keep an eye on these developments:

| What to watch | Why it matters |
|---|---|
| NVIDIA’s next chip generation | Sets the performance ceiling for most AI tools |
| OpenAI’s IPO progress | Will signal how the market values AI infrastructure |
| Energy deals by big tech | Signals where AI capacity will grow next |
| Open-source model quality | Open-weight models are now close to top closed models on many benchmarks (ByteByteGo), which could loosen infrastructure lock-in |
| Government AI regulation | The U.S. is pushing toward a unified national AI framework, while China’s open-source AI is gaining strategic ground (WBN News) |

The Bottom Line

The AI race in 2026 is being won and lost not in research labs, but in power grids, chip fabs, and boardrooms.

That doesn’t mean smaller players are out of the game. It means the game has new rules — and knowing those rules is the first step to playing smart.

Whether you’re a developer, a business owner, or just someone who uses AI tools every day, the infrastructure war will quietly shape what’s possible for you. Paying attention now means fewer surprises later.

Which part of the AI infrastructure story do you find most surprising? Drop a comment below — we’d love to know what you’re watching.

Want to go deeper? Check out our guide on [AI cloud providers compared] and [how agentic AI is changing workflows in 2026].