As artificial intelligence systems become more autonomous and more capable of making independent decisions, the question of how to govern them has shifted from a theoretical concern to an urgent business priority. AI agents are being deployed across industries at a rapid pace. These are software systems that can perceive their environment, make decisions, and take actions without constant human intervention. Yet the governance frameworks needed to keep these systems safe, transparent, and aligned with human values are still being developed. The gap between how fast AI is advancing and how slowly governance is maturing is one of the most critical challenges organizations face today.

What Are AI Agents and Why Do They Need Governance?

AI agents represent a significant evolution in artificial intelligence. Traditional AI systems return a single output, such as a prediction or a classification, for each input. Agents, by contrast, operate autonomously: they pursue goals over multiple steps, take actions through tools and other systems, and adjust their behavior based on feedback from their environment. They might manage supply chains, make financial decisions, coordinate complex workflows, or interact directly with customers. The autonomy that makes them valuable, their ability to handle nuanced situations without constant human oversight, is precisely what makes governance essential.

The stakes are high. When an AI agent operates as “shadow AI”, deployed without proper oversight or documentation, it creates hidden risks. A poorly governed autonomous system might make biased decisions affecting hiring, lending, or criminal justice. It could expose sensitive data, violate regulations, or run up unexpected costs. Consider a supply chain optimization agent that makes purchasing decisions on its own. Without proper governance, it might prioritize cost-cutting in ways that create ethical or compliance problems, for example by switching to a cheaper supplier that has never passed a compliance review. The need for AI agent governance isn’t about stifling innovation; it’s about enabling responsible deployment at scale.

Organizations are only beginning to understand the scope of this challenge. Many enterprises may already have dozens of AI agents operating across departments, but few have comprehensive governance frameworks. This gap between deployment velocity and governance maturity is where risk concentrates. The companies getting ahead are those putting governance structures in place now, before the number of autonomous agents makes governing them after the fact unmanageable.

Key Components of Effective AI Agent Governance

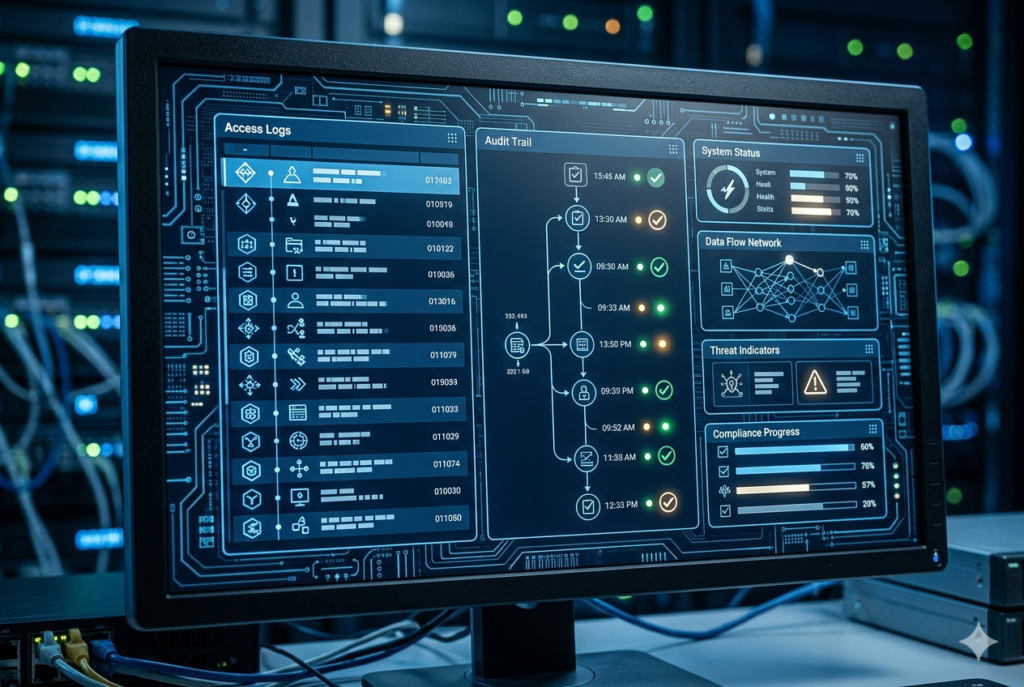

Effective governance frameworks for AI agents typically include several interconnected components. First is visibility and inventory management: organizations must know what agents they have, what those agents are doing, and how they are performing. This seems basic, but many enterprises struggle with it. Shadow AI systems can operate undetected until something goes wrong. A centralized registry where every autonomous system is documented and monitored is therefore foundational.
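A central registry can start very small. The following is a minimal, hypothetical Python sketch: the `AgentRecord` and `AgentRegistry` names, their fields, and the risk-tier labels are illustrative assumptions, not any product’s API. It records an accountable owner and purpose for each agent and flags shadow agents by comparing the registry against agent IDs observed at runtime, which in practice might come from API gateway or network logs.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentRecord:
    """One entry in a hypothetical central agent registry."""
    agent_id: str
    owner: str      # accountable person or team
    purpose: str    # what the agent is approved to do
    risk_tier: str  # illustrative labels, e.g. "low", "medium", "high"
    registered_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


class AgentRegistry:
    """Minimal in-memory registry; a real one would be a shared, durable store."""

    def __init__(self) -> None:
        self._agents: dict[str, AgentRecord] = {}

    def register(self, record: AgentRecord) -> None:
        # Refuse silent overwrites so ownership changes are deliberate.
        if record.agent_id in self._agents:
            raise ValueError(f"agent {record.agent_id!r} is already registered")
        self._agents[record.agent_id] = record

    def get(self, agent_id: str) -> AgentRecord | None:
        return self._agents.get(agent_id)

    def find_shadow_agents(self, observed_ids: set[str]) -> set[str]:
        """Return agent IDs seen in runtime telemetry that have no registry entry."""
        return observed_ids - set(self._agents)
```

Keeping the shadow check as a plain set difference means it can run against any telemetry source that yields agent identifiers.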

Second is access control and authorization frameworks. Who can deploy AI agents? Who can modify them? What approval processes exist? Third is monitoring and alerting—the ability to detect when agents behave anomalously or violate defined parameters. Fourth is audit trails and explainability, ensuring decisions made by autonomous systems can be traced, understood, and justified if challenged. Finally, there’s incident response—protocols for how to handle situations when agents malfunction, produce biased outputs, or violate organizational policy.
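To make access control, monitoring, and audit trails concrete, here is a minimal sketch under stated assumptions: the role names, the per-action limits, and the `GuardedExecutor` class are hypothetical. It checks who may perform lifecycle operations on agents, rejects agent actions that fall outside defined parameters, and writes every decision, allowed or not, to an append-only audit log. Rejected actions are also queued as alerts for human review.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

# Hypothetical mapping of roles to the lifecycle operations they may perform.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "agent-developer": {"modify"},
    "governance-lead": {"deploy", "modify", "retire"},
}


def can_perform(role: str, operation: str) -> bool:
    """Return True if the role may perform the operation; unknown roles get nothing."""
    return operation in ROLE_PERMISSIONS.get(role, set())


@dataclass(frozen=True)
class AuditEvent:
    timestamp: str
    agent_id: str
    action: str
    params: dict
    allowed: bool
    reason: str


class GuardedExecutor:
    """Checks each proposed agent action against limits and records the outcome."""

    def __init__(self, limits: dict[str, float]) -> None:
        self.limits = limits                # hypothetical, e.g. {"purchase": 10_000.0}
        self.audit_log: list[str] = []      # append-only JSON lines
        self.alerts: list[AuditEvent] = []  # rejected actions for human review

    def submit(self, agent_id: str, action: str, amount: float) -> bool:
        limit = self.limits.get(action)
        if limit is None:
            allowed, reason = False, "action not in agent's permitted set"
        elif amount > limit:
            allowed, reason = False, f"amount {amount} exceeds limit {limit}"
        else:
            allowed, reason = True, "within limits"
        event = AuditEvent(
            timestamp=datetime.now(timezone.utc).isoformat(),
            agent_id=agent_id,
            action=action,
            params={"amount": amount},
            allowed=allowed,
            reason=reason,
        )
        # Log every decision, not just failures, so decisions can be reconstructed.
        self.audit_log.append(json.dumps(asdict(event)))
        if not allowed:
            self.alerts.append(event)
        return allowed
```

Logging allowed actions as well as rejected ones supports explainability: someone reconstructing a decision later needs the full trail, not just the exceptions.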

The most mature organizations are implementing these components in integrated systems. Tools like KiloClaw represent emerging solutions specifically designed to target shadow AI and bring autonomous agents under governance. Rather than treating AI governance as a compliance checkbox, leading companies view it as an operational necessity—similar to how mature IT organizations manage cloud infrastructure, databases, or network security. The governance framework becomes part of how you operate, not something added afterward.

Data Governance as the Foundation

Autonomous AI systems depend on sound data governance. An AI agent is only as good as the data it learns from and the data it acts on. If governance covers only the agent’s behavior and ignores data quality, bias, and access controls, the system remains at risk. Data governance for AI agents means understanding data lineage, ensuring training data is representative and free from harmful bias, and controlling what data agents can access when making decisions.
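Controlling what data an agent can access, and recording what it read, can be sketched as a per-agent allowlist plus a lineage log. This is a hypothetical example: `DataPolicy`, `LineageLog`, and `read_for_agent` are illustrative names, and a production system would more likely enforce such a policy in the data platform itself than in application code.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class DataPolicy:
    """Hypothetical per-agent policy: dataset name -> columns the agent may read."""
    allowed: dict[str, set[str]]


@dataclass
class LineageLog:
    """Records which agent read which dataset and which columns it was given."""
    reads: list[tuple[str, str, tuple[str, ...]]] = field(default_factory=list)


def read_for_agent(
    agent_id: str,
    dataset: str,
    rows: list[dict],
    policy: DataPolicy,  # the calling agent's own policy
    lineage: LineageLog,
) -> list[dict]:
    """Return only the permitted columns of `rows`, or refuse the read entirely."""
    if dataset not in policy.allowed:
        raise PermissionError(f"{agent_id} is not permitted to read {dataset}")
    permitted = policy.allowed[dataset]
    # Deny by default: drop every column the policy does not explicitly allow.
    filtered = [{k: v for k, v in row.items() if k in permitted} for row in rows]
    # Record the read so later decisions can be traced back to their inputs.
    lineage.reads.append((agent_id, dataset, tuple(sorted(permitted))))
    return filtered
```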

This is where many governance efforts fail. Organizations implement agent monitoring but neglect the data foundations. An autonomous recruitment agent might be perfectly governed in terms of monitoring and explainability, yet still produce biased outcomes if trained on historical hiring data that reflects past discrimination. The data governance layer must address data quality, representativeness, freshness, and access controls.
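For the recruitment example, one common screening check is to compare selection rates across groups in the historical data before training on it. The sketch below applies the four-fifths (80%) rule from the US Uniform Guidelines on Employee Selection Procedures, under which a group’s selection rate below 80% of the highest group’s rate is generally treated as evidence of possible adverse impact. It is a heuristic first pass rather than a complete fairness analysis, and the function names and the `(group, was_hired)` record format are assumptions.

```python
from __future__ import annotations

from collections import Counter
from collections.abc import Iterable


def selection_rates(records: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Hire rate per group from (group, was_hired) records."""
    applicants = Counter()
    hires = Counter()
    for group, hired in records:
        applicants[group] += 1
        if hired:
            hires[group] += 1
    return {group: hires[group] / applicants[group] for group in applicants}


def adverse_impact_ratios(records: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(records)
    if not rates:
        return {}
    best = max(rates.values())
    if best == 0:
        # Nobody in any group was selected, so there is no rate disparity to measure.
        return {group: 1.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flag_groups(records: Iterable[tuple[str, bool]], threshold: float = 0.8) -> set[str]:
    """Groups whose impact ratio falls below the four-fifths threshold."""
    return {g for g, ratio in adverse_impact_ratios(records).items() if ratio < threshold}
```

For example, if group A’s historical hire rate is 50% and group B’s is 20%, B’s ratio is 0.4, so B would be flagged for review before the data is used for training.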

The interaction between agent governance and data governance is particularly important in regulated industries. Financial institutions deploying autonomous trading agents, healthcare systems using autonomous diagnostic assistants, or government agencies implementing autonomous benefit determination systems all face the challenge of ensuring their data is governed properly. Regulators increasingly expect to see comprehensive data governance as part of AI governance frameworks, not as something separate.

Regulatory Landscape and Compliance Considerations

The regulatory environment around AI governance is evolving rapidly. The EU’s AI Act classifies AI systems by risk level and imposes governance obligations on high-risk applications, including risk management, data governance, record-keeping, and human oversight. Similar efforts are emerging elsewhere: China’s Five-Year Plan sets specific targets for AI deployment that include governance elements, and many other jurisdictions are developing their own standards. For organizations deploying AI agents, understanding these regulatory expectations is critical.

Compliance isn’t just about meeting external requirements; it’s about managing risk. An autonomous agent that violates data privacy regulations, makes discriminatory decisions, or operates outside defined parameters creates liability for the organization that deployed it. Progressive companies are implementing governance frameworks that exceed current regulatory minimums, positioning themselves ahead of the stricter standards they expect. They understand that governance is ultimately about trust: trust with regulators, customers, employees, and the public.

The regulatory focus on governance reflects a practical reality: autonomous systems require oversight structures that traditional software doesn’t. You might deploy an email system with minimal governance, but deploying an agent that makes binding business decisions requires something far more robust. As AI agents become more capable and more widely deployed, regulatory pressure for governance is likely to intensify.

Building Governance into Your Organization

Implementing AI agent governance effectively requires more than technology; it requires organizational change. That starts with clear ownership and accountability. Someone needs to be responsible for AI governance, whether that’s a Chief AI Officer, a dedicated governance team, or distributed responsibility with clear coordination mechanisms. Without clear accountability, governance becomes nobody’s job.

It also requires training and culture change. Teams deploying AI agents need to understand governance requirements and why they matter. It requires investment in governance tools and platforms. And it requires governance to be part of the development process from the beginning, not an afterthought. The best organizations treat AI governance like security: integrated into development practices, reviewed at every stage, and continuously monitored.

Organizations should start by conducting an audit of existing AI systems, including shadow AI agents already operating. Then establish a governance framework appropriate to your risk profile and regulatory environment. Implement tools for monitoring, tracking, and controlling autonomous systems. Develop policies for approval, modification, and retirement of AI agents. Finally, establish metrics for governance effectiveness and continuously improve based on what you learn.
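Policies for approval, modification, and retirement can be encoded as an explicit lifecycle so that an agent cannot skip review. The stages, the allowed transitions, and the registry-coverage metric below are hypothetical choices made for illustration; each organization would define its own.

```python
from __future__ import annotations

from enum import Enum


class Stage(Enum):
    """Hypothetical lifecycle stages for a governed agent."""
    PROPOSED = "proposed"
    APPROVED = "approved"
    DEPLOYED = "deployed"
    UNDER_REVIEW = "under_review"  # entered on a modification request or incident
    RETIRED = "retired"


# Allowed transitions; anything not listed here is rejected.
TRANSITIONS: dict[Stage, set[Stage]] = {
    Stage.PROPOSED: {Stage.APPROVED, Stage.RETIRED},
    Stage.APPROVED: {Stage.DEPLOYED, Stage.RETIRED},
    Stage.DEPLOYED: {Stage.UNDER_REVIEW, Stage.RETIRED},
    Stage.UNDER_REVIEW: {Stage.APPROVED, Stage.RETIRED},
    Stage.RETIRED: set(),  # retirement is final
}


def transition(current: Stage, target: Stage) -> Stage:
    """Move an agent to `target`, refusing any transition the policy does not allow."""
    if target not in TRANSITIONS[current]:
        raise ValueError(f"cannot move from {current.value} to {target.value}")
    return target


def registry_coverage(registered: set[str], observed: set[str]) -> float:
    """One possible effectiveness metric: share of observed agents that are registered."""
    if not observed:
        return 1.0
    return len(observed & registered) / len(observed)
```

In this sketch, modifying a deployed agent means moving it to `UNDER_REVIEW`, which forces it back through approval before it can be redeployed. Tracking `registry_coverage` over time gives a simple signal of whether shadow AI is shrinking.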

The Competitive Advantage of Governance

While governance might seem like a compliance burden, forward-thinking organizations see it as a competitive advantage. Companies with robust AI governance can deploy agents more confidently and at greater scale. They’re less likely to face costly incidents or regulatory action. They inspire greater customer and stakeholder trust. They may also attract better talent, since many people want to work on AI projects that operate responsibly. And they’re better positioned as regulatory standards tighten: they’re more likely to already meet new requirements while competitors scramble to catch up.

The organizations that will win in the AI economy are those that figure out how to deploy powerful autonomous systems responsibly. That requires governance. The time to implement it is now, before AI agents become so widespread that retrofitting governance becomes impossibly complex. The companies getting governance right today will be the leaders tomorrow.